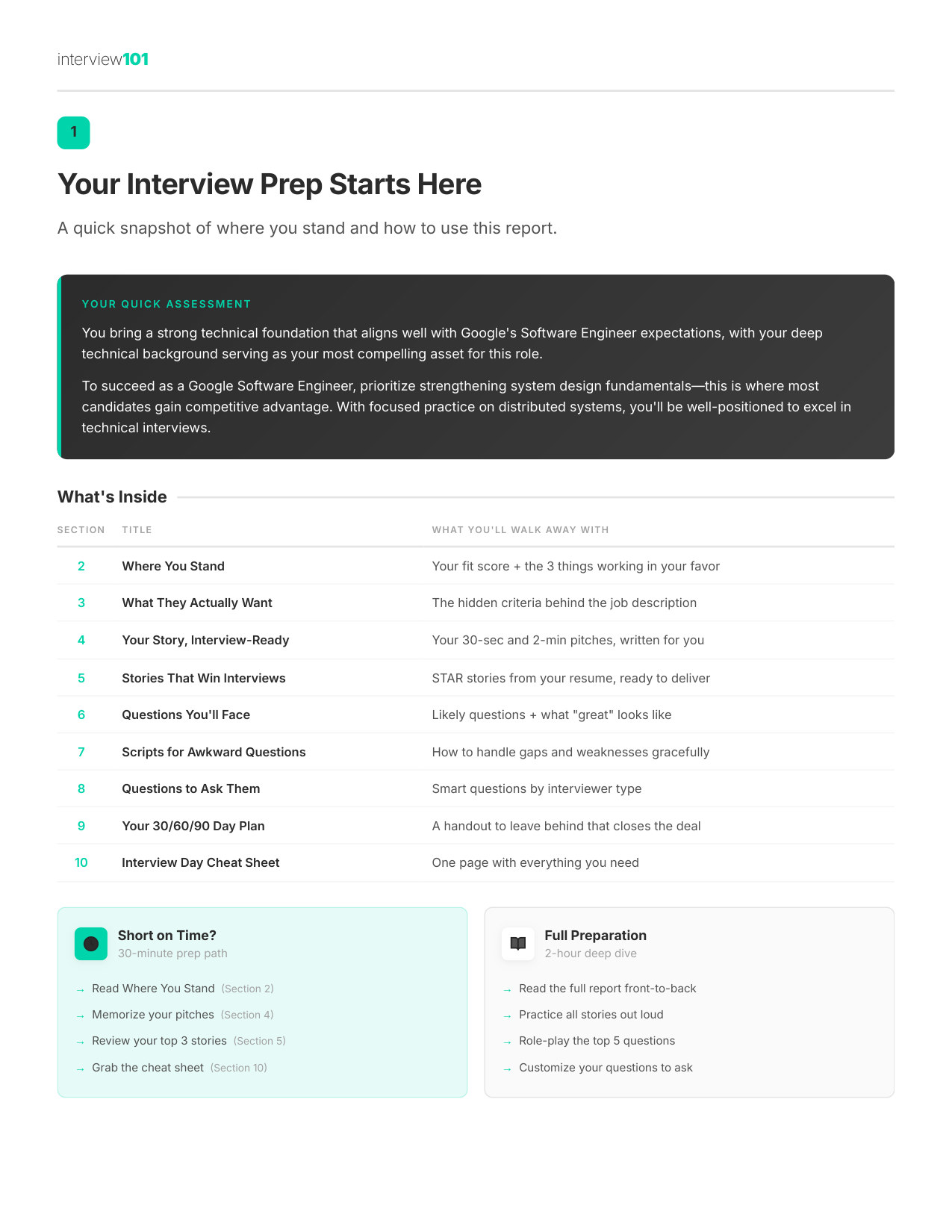

Google tests both theoretical ML understanding and practical implementation skills, with particular emphasis on production ML systems and modern GenAI capabilities. You need to demonstrate deep knowledge of ML fundamentals while also showing you can build scalable, reliable ML systems that serve real users. GenAI literacy means understanding transformer architectures, fine-tuning strategies, evaluation challenges, and responsible deployment practices for large language models.

How to Demonstrate: When discussing ML approaches, always connect them to production constraints like serving latency, data drift monitoring, and A/B testing frameworks. For GenAI questions, go beyond basic concepts to discuss practical challenges like prompt engineering, retrieval-augmented generation, and managing hallucinations in production systems. In coding rounds, write clean, efficient code and address error handling, edge cases, and scalability without waiting to be prompted. Demonstrate familiarity with modern ML infrastructure patterns like feature stores, model versioning, and continuous training pipelines to show you understand the full ML lifecycle, not just model development.
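To make "data drift monitoring" concrete in an interview, it can help to have one simple metric you can write from memory. The sketch below computes a Population Stability Index (PSI) between a training-time sample and a serving-time sample of a single numeric feature. It is one common illustrative choice, not a method any particular team is known to use; the function name `population_stability_index`, the equal-width binning, and the `eps` floor are assumptions made for this example.

```python
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    n_bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Illustrative PSI between a baseline sample and a live sample.

    Bins are equal-width over the baseline's range (an assumption of this
    sketch); live values outside that range are clamped into the edge bins.
    """
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    if n_bins < 1:
        raise ValueError("n_bins must be at least 1")

    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("baseline sample has zero range; cannot bin it")
    width = (hi - lo) / n_bins

    def bin_fractions(values: Sequence[float]) -> list[float]:
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), n_bins - 1)  # clamp out-of-range values
            counts[idx] += 1
        # Floor each fraction at eps so the log below is always defined.
        return [max(c / len(values), eps) for c in counts]

    e = bin_fractions(expected)
    a = bin_fractions(actual)
    # Each term is non-negative; identical histograms give exactly 0.
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


if __name__ == "__main__":
    baseline = [i / 100 for i in range(100)]        # roughly uniform on [0, 1)
    shifted = [0.5 + i / 200 for i in range(100)]   # mass moved to upper half
    print(population_stability_index(baseline, baseline))
    print(population_stability_index(baseline, shifted))
```

A widely quoted rule of thumb treats PSI above roughly 0.25 as a significant shift, but that threshold is a convention rather than a guarantee. In a discussion it is worth noting the trade-offs: the result depends on bin count and binning scheme, and a per-feature metric like this says nothing about changes in the relationship between features and labels.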